Character Consistency: Master AI Image Generation for Storytelling in 2026!

Why This Matters: For creators building visual narratives, marketing campaigns, or game assets, character consistency represents the difference between amateur output and professional storytelling. This comprehensive guide reveals the technical architecture, proven workflows, and cutting-edge tools that transform AI-generated characters from random variations into reliable, recognizable identities across multiple images and scenes.

What Is Character Consistency and Why Should You Care?

Character consistency in AI image generation refers to maintaining identical facial features, body structure, clothing, and visual identity across multiple outputs. When you generate an image of a character, consistency ensures that same character appears recognizable in the next 100 images—regardless of changes in pose, lighting, or background.

The core challenge: Traditional AI models like OpenAI’s DALL-E or early versions of Stable Diffusion operate without memory. Each prompt produces a unique interpretation, making it nearly impossible to generate the same person twice. This limitation has historically blocked creators from using AI for sequential storytelling, brand mascots, or cohesive visual campaigns.

In 2026, this problem has multiple solutions. Tools now offer character consistency through reference systems, identity adapters, and fine-tuning techniques. Understanding which method fits your workflow determines whether you spend 5 minutes or 5 hours maintaining visual coherence.

Key Notes: Essential Information Gain

Instant Consistency vs. Training Investment: Reference-based systems (Midjourney --cref, OpenArt Character) deliver immediate results but offer less control than trained LoRAs. Choose based on project scale: quick experiments favor references, while long-term asset libraries justify training time.

Identity Adapter Hierarchy: InstantID excels at facial fidelity and realistic styles; IP-Adapter provides better text control and abstract style transfers; LoRA training offers maximum consistency but requires upfront dataset preparation. The optimal choice depends on specific use case requirements.

Multi-Character Complexity: Generating scenes with 2+ consistent characters exponentially increases difficulty. Individual LoRAs with careful prompt weighting produce the most reliable results, though regional prompting and inpainting provide fallback solutions for complex compositions.

Technical Limitations Persist: Fine details (freckles, small tattoos, logos), extreme poses with occlusion, and dramatic style transfers remain challenging. Workarounds involve detailed prompting, two-stage generation processes, and careful reference selection to minimize these constraints.

How Do Modern AI Tools Create Consistent Characters?

The technical foundation of character consistency relies on three distinct approaches: reference-based systems, identity preservation adapters, and model fine-tuning. Each method offers different trade-offs between speed, fidelity, and flexibility.

Reference-Based Systems: The Quick Solution

Midjourney’s Character Reference feature uses the --cref parameter followed by an image URL. It arrived with the V6 models; the Omni-Reference feature introduced in V7 is a separate system with its own --oref parameter. Character Reference analyzes facial features, hair, and clothing from a reference image, then applies those characteristics to new generations. Midjourney’s documentation specifies that character weight (--cw) ranges from 0 to 100, where higher values preserve more detail from the reference.

OpenArt emerged as a 2025 standout for versatility across art styles. Their Character feature allows creators to save custom character profiles that the AI remembers across sessions. Independent testing showed OpenArt achieving near-perfect scores for consistent character generation across realistic, anime, cartoon, and oil painting styles.

Identity Preservation Adapters: Technical Precision

InstantID advanced identity preservation by introducing a plug-and-play module that works with Stable Diffusion models. According to the research paper published on arXiv, InstantID employs three components: face ID embeddings from recognition models, a lightweight adapted module with decoupled cross-attention, and an IdentityNet that encodes detailed facial features with spatial control.

The critical advantage: InstantID preserves facial identity from a single reference image without test-time tuning. The system demonstrated superior fidelity compared to IP-Adapter-FaceID in blending faces with backgrounds, especially for non-realistic styles like anime.

IP-Adapter represents another approach, using cross-attention mechanisms to separate text and image features. While effective for basic consistency, its reliance on CLIP image encoders produces weaker signals compared to face-specific encoders. The trade-off benefits creators who prioritize text control over absolute facial fidelity.
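The decoupled cross-attention idea can be illustrated with a toy example: the query attends to text features and to image features in two separate passes, and the image result is added with a scale factor. This is a minimal pure-Python sketch, assuming single-head attention on tiny hand-written vectors and no learned projections (real adapters apply learned key and value projections inside the diffusion model). All function names and numbers are illustrative, not taken from any library.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Single-query scaled dot-product attention."""
    dim = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim) for key in keys]
    weights = softmax(scores)
    return [sum(w * v[i] for w, v in zip(weights, values)) for i in range(len(values[0]))]

def decoupled_cross_attention(query, text_feats, image_feats, image_scale=1.0):
    """Attend to text and image features separately, then sum the results.

    Simplification: features double as keys and values (no projections).
    """
    text_out = attention(query, text_feats, text_feats)
    image_out = attention(query, image_feats, image_feats)
    return [t + image_scale * i for t, i in zip(text_out, image_out)]

query = [1.0, 0.0]
text_feats = [[1.0, 0.0], [0.0, 1.0]]   # toy "text token" features
image_feats = [[0.5, 0.5]]              # toy "reference image" feature

text_only = decoupled_cross_attention(query, text_feats, image_feats, image_scale=0.0)
blended = decoupled_cross_attention(query, text_feats, image_feats, image_scale=0.7)
print(text_only, blended)
```

Setting image_scale to 0 reduces the result to plain text attention, which is why lowering the adapter strength hands control back to the prompt.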

Model Fine-Tuning: Maximum Control

LoRA (Low-Rank Adaptation) training offers the strongest character consistency for repeated use. This technique fine-tunes Stable Diffusion models by adding small trainable parameters while keeping the base model frozen. According to Stable Diffusion Art, LoRA files remain compact (typically 10-200MB) compared to full model checkpoints (2-4GB), making them practical for creators managing multiple characters.
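The compactness follows from simple arithmetic: instead of updating a full weight matrix, LoRA learns two thin matrices whose product approximates the update. The sketch below compares parameter counts for a single layer. The 768 x 768 layer size and rank 8 are illustrative assumptions, not figures from the sources cited above.

```python
def full_update_params(d_out, d_in):
    """Parameters touched when fine-tuning a d_out x d_in weight matrix directly."""
    return d_out * d_in

def lora_update_params(d_out, d_in, rank):
    """Parameters in the two low-rank factors: B (d_out x rank) and A (rank x d_in)."""
    return rank * (d_out + d_in)

# Illustrative sizes only: one 768 x 768 projection adapted at rank 8.
full = full_update_params(768, 768)
lora = lora_update_params(768, 768, rank=8)
reduction = full / lora
print(f"full: {full:,}  lora: {lora:,}  reduction: {reduction:.0f}x")
```

Raising the rank increases capacity and file size linearly, which is one reason LoRA files vary so widely in size.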

Training requires 15-30 high-quality images showing diverse poses, expressions, and angles. Research from Everly Heights demonstrates that character sheets—single images containing multiple poses—can be divided and processed to create robust training datasets from minimal source material. The training process typically completes in 1-2 hours on an RTX 4090 or 3-5 hours on an RTX 3070.
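Dividing a character sheet can be sketched as computing crop boxes for a regular grid. This assumes the sheet is laid out in evenly sized cells, which many real sheets are not, so manual cropping may still be needed. The function name and the 2048 x 1024 sheet size are hypothetical; the boxes use the (left, upper, right, lower) ordering common to image libraries.

```python
def grid_crop_boxes(width, height, rows, cols):
    """Return (left, upper, right, lower) boxes for each cell of a rows x cols grid."""
    if rows < 1 or cols < 1:
        raise ValueError("rows and cols must be at least 1")
    tile_w = width // cols
    tile_h = height // rows
    boxes = []
    for row in range(rows):
        for col in range(cols):
            left = col * tile_w
            upper = row * tile_h
            boxes.append((left, upper, left + tile_w, upper + tile_h))
    return boxes

# Hypothetical sheet: 8 poses arranged in 2 rows of 4.
boxes = grid_crop_boxes(2048, 1024, rows=2, cols=4)
for index, box in enumerate(boxes):
    print(f"pose_{index:02d}: {box}")
```

Each box can then be passed to an image library's crop function to produce one training image per pose.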

Which Platform Delivers Perfect Character Consistency in 2026?

Different creators need different tools. Game developers building asset libraries have different requirements than authors illustrating children’s books or marketers creating campaign visuals.

For Multi-Style Versatility: OpenArt

OpenArt’s Character feature excels when projects require the same character in drastically different styles. A single character profile works seamlessly across photorealistic, anime, cartoon, and painterly styles while maintaining identity. The platform’s interface emphasizes ease of use—creators describe the character once, and the AI generates variations across any scene or art style without manual adjustments.

For Cartoon and Illustrated Characters: Neolemon

Neolemon focuses exclusively on illustrated styles, serving over 20,000 creators including self-publishing authors and educators. After mid-2025, the platform discontinued photorealistic outputs to concentrate on cartoon, Pixar-quality, and 2D illustrated characters. The integrated suite includes a structured prompt interface separating character description (appearance, outfit) from scene description (action, background, art style), enabling precise control over consistency.

For Deep Identity Control: InstantID and IP-Adapter

Technical creators using ComfyUI or AUTOMATIC1111 gain maximum control through InstantID and IP-Adapter implementations. These systems integrate with existing Stable Diffusion workflows, allowing precise parameter tuning for specific use cases. According to InstantID documentation, the system achieves competitive results with pre-trained LoRAs without any training, making it ideal for rapid prototyping.

For Professional Workflows: LoRA Training

Studios and agencies requiring hundreds of consistent assets should invest in LoRA training. The upfront time investment (dataset preparation, training, testing) pays dividends through unlimited generation flexibility. PropelRC research indicates that properly trained LoRAs maintain perfect character consistency even in edge cases like extreme poses, unusual lighting, or complex environments.

How Can I Maintain Character Consistency Across Different Poses?

Pose variation represents the most common failure point for character consistency. A character who looks identical in frontal portraits often becomes unrecognizable in profile or three-quarter views.

The Technical Solution: ControlNet Integration

ControlNet adds structural guidance to image generation by preserving spatial information from reference images. When combined with identity preservers like InstantID, ControlNet locks body positioning while the identity adapter maintains facial features. This dual-control approach enables creators to generate consistent characters in any pose.

OpenPose is a pose estimator that extracts skeletal keypoints from reference images; a ControlNet model trained on OpenPose skeletons then uses that structure to guide generation. According to Skywork AI research, combining OpenPose with IP-Adapter FaceID Plus v2 at 20-30 steps and CFG 5-9 produces reliable results for most pose variations. For SDXL models, the recommended workflow includes loading the base model in models/Stable-diffusion, installing the ControlNet extension, and setting IP-Adapter strength between 0.6-0.8.

Practical Workflow for Different Poses

The most efficient workflow for pose variation begins with generating a base character in a neutral, front-facing position. This image becomes the reference anchor. For each new pose:

- Create a rough sketch or use a reference photo showing the desired body position

- Apply OpenPose ControlNet to extract the skeletal structure

- Use InstantID or IP-Adapter with the original character reference

- Adjust adapter strength (typically 0.6-0.7) to balance consistency with pose flexibility

- Generate multiple variations and select the best result

This workflow maintains facial features, body proportions, and outfit details while enabling full body poses, action scenes, or dynamic compositions.
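The steps above can be sketched as a small job description that a generation script might consume. This is not tied to any specific tool: the PoseJob class, field names, and file names are hypothetical, and the numeric ranges are the ones quoted in this guide, treated as soft recommendations rather than hard limits.

```python
from dataclasses import dataclass

# Ranges quoted in this guide; flagged as advisory, not enforced.
RECOMMENDED = {
    "steps": (20, 30),
    "cfg_scale": (5.0, 9.0),
    "adapter_strength": (0.6, 0.8),
}

@dataclass
class PoseJob:
    character_ref: str          # neutral, front-facing anchor image
    pose_ref: str               # sketch or photo showing the target pose
    steps: int = 25
    cfg_scale: float = 7.0
    adapter_strength: float = 0.65

    def out_of_range(self):
        """Return the names of settings outside the recommended ranges."""
        flagged = []
        for name, (low, high) in RECOMMENDED.items():
            if not low <= getattr(self, name) <= high:
                flagged.append(name)
        return flagged

def plan_variations(job, count, base_seed=0):
    """Produce one settings dict per variation, differing only by seed."""
    return [
        {"seed": base_seed + i, "character_ref": job.character_ref,
         "pose_ref": job.pose_ref, "steps": job.steps,
         "cfg_scale": job.cfg_scale, "adapter_strength": job.adapter_strength}
        for i in range(count)
    ]

job = PoseJob("maya_front.png", "running_pose.png")
plans = plan_variations(job, count=4, base_seed=100)
print(job.out_of_range(), [p["seed"] for p in plans])
```

Keeping everything except the seed fixed makes it easier to compare variations and pick the best result.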

What Are the Best Prompts for Consistent Character AI?

Prompt engineering remains crucial even when using advanced consistency tools. Well-structured prompts provide the AI with clear boundaries and specific details that support visual consistency.

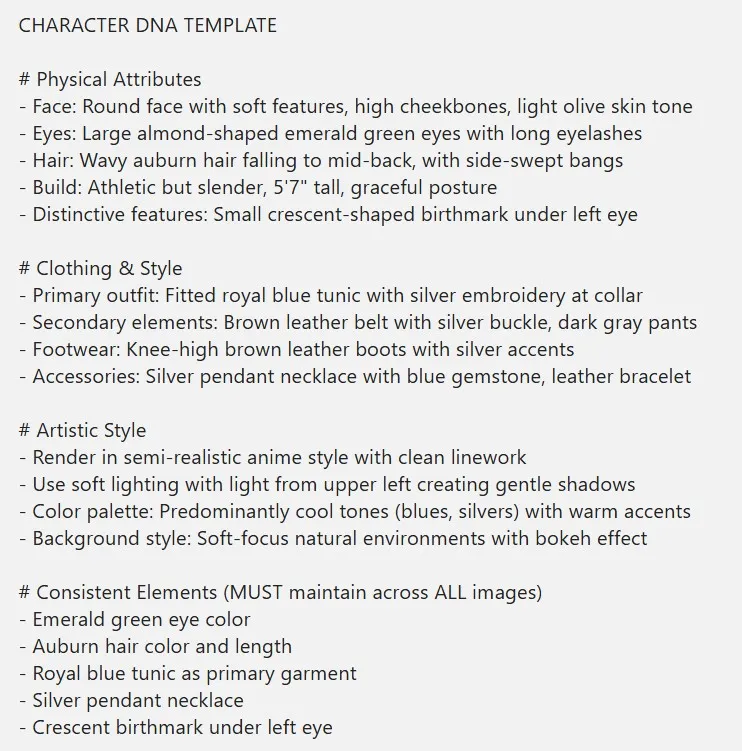

The DNA Template Method

ChatGPT users benefit from creating comprehensive “DNA templates” for characters. According to LaoZhang AI research, effective templates include: physical attributes (age, height, build, distinguishing features), facial details (eye color, shape, spacing, nose structure, mouth shape, jawline), hair specifications (color, length, texture, style), and signature clothing (specific garments, colors, accessories, style notes).

A complete DNA template might read:

“Maya, 28-year-old Latina woman, 5’6″ athletic build, oval face with high cheekbones, almond-shaped dark brown eyes, slightly upturned nose, full lips, defined jawline, wavy black hair reaching mid-back usually in a ponytail, olive skin tone, wearing navy blue athletic jacket with white stripes, black leggings, white running shoes.”

This detailed foundation allows the AI to generate multiple variations while maintaining core identity markers. The template should be referenced verbatim in each new prompt, with only scene-specific details changing.

Structured Prompt Architecture

The most effective prompts separate character identity from scene description using a hierarchical structure:

Character Identity Block (remains constant): “Maya, 28-year-old Latina woman, athletic build, wavy black ponytail, navy athletic jacket”

Scene Variable Block (changes per image): “running through urban park at sunrise, motion blur on background, cinematic lighting, wide-angle shot”

Style Specification Block: “photorealistic, highly detailed, professional photography, 35mm lens, shallow depth of field”

This architecture enables creators to maintain perfect consistency while exploring unlimited scenarios. The consistent identity block ensures recognition across images, while variable blocks provide creative flexibility.
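One way to enforce this separation is to keep the identity and style blocks as constants and assemble prompts programmatically. The sketch below is a minimal illustration, not a feature of any platform. The build_prompt helper is hypothetical, and the second scene string is invented for the example.

```python
# Constant blocks, reused verbatim for every image.
IDENTITY = ("Maya, 28-year-old Latina woman, athletic build, "
            "wavy black ponytail, navy athletic jacket")
STYLE = ("photorealistic, highly detailed, professional photography, "
         "35mm lens, shallow depth of field")

def build_prompt(identity, scene, style):
    """Join the three blocks in a fixed order: identity, scene, style."""
    parts = [part.strip().rstrip(",") for part in (identity, scene, style)]
    if not all(parts):
        raise ValueError("every block must be non-empty")
    return ", ".join(parts)

# Only the scene block changes between images.
scenes = [
    "running through urban park at sunrise, motion blur on background, "
    "cinematic lighting, wide-angle shot",
    "ordering coffee at a street kiosk, overcast light, medium shot",
]
prompts = [build_prompt(IDENTITY, scene, STYLE) for scene in scenes]
for prompt in prompts:
    print(prompt)
```

Because the identity text is never retyped, accidental wording changes between prompts are ruled out.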

How Do I Handle Character Consistency for Multiple Characters?

Multi-character scenes present exponential complexity. Each character requires individual consistency, plus compositional coherence between characters.

Individual LoRA Approach

The most reliable method trains separate LoRAs for each character, then combines them during generation. According to Apatero research, LoRA strength controls individual influence (typically 0.5-0.8 per character). Multiple LoRAs work together when balanced properly, allowing complex scenes with 3-5 consistent characters.

The workflow requires:

- Train individual LoRAs with unique trigger words (e.g., “maya_athlete”, “carlos_scientist”)

- Test each LoRA independently to verify consistency

- Combine in prompts using weighted syntax: “(maya_athlete:0.7) and (carlos_scientist:0.6) having coffee in modern cafe”

- Adjust weights to prevent one character dominating the composition

- Use regional prompting or inpaint to refine individual character placement
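A note on syntax before sketching the combination step: in AUTOMATIC1111, the parenthesized (word:0.7) form adjusts prompt emphasis on a word, while LoRA files themselves are loaded with a tag of the form <lora:filename:multiplier>. The sketch below builds a prompt containing both trigger words and LoRA tags. It assumes each LoRA file is named after its trigger word, which is a hypothetical convention, and the helper names are illustrative.

```python
def lora_tag(filename, weight):
    """Format an AUTOMATIC1111-style LoRA tag: <lora:filename:multiplier>."""
    if weight <= 0:
        raise ValueError("weight must be positive")
    return f"<lora:{filename}:{weight}>"

def multi_character_prompt(characters, scene):
    """Combine trigger words, the scene text, and one LoRA tag per character."""
    triggers = " and ".join(c["trigger"] for c in characters)
    tags = " ".join(lora_tag(c["lora"], c["weight"]) for c in characters)
    return f"{triggers} {scene} {tags}"

# Assumption: LoRA file names match the trigger words used during training.
characters = [
    {"lora": "maya_athlete", "trigger": "maya_athlete", "weight": 0.7},
    {"lora": "carlos_scientist", "trigger": "carlos_scientist", "weight": 0.6},
]
prompt = multi_character_prompt(characters, "having coffee in modern cafe")
print(prompt)
```

Keeping weights in a data structure makes it straightforward to lower one character's influence when it starts dominating the composition.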

Reference Image Concatenation

For platforms like Midjourney, multiple reference URLs can be concatenated: --cref URL1 URL2 URL3. This approach blends character information from multiple images. Research from Geeky Curiosity demonstrates that URL order affects weighting—earlier URLs receive stronger influence.

For two-character scenes, using --cref character1_url character2_url with appropriate character weight (--cw 80-100) maintains both identities. More complex scenes require careful prompt engineering to specify which character occupies which position in the composition.
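Assembling these parameters by hand is error-prone, so a small helper can validate them first. The sketch below only builds the prompt string; it does not call Midjourney. The function name and the example.com URLs are placeholders, and the 0-100 bound on --cw is the range stated earlier in this guide.

```python
def midjourney_prompt(description, ref_urls, character_weight=100):
    """Append --cref with one or more reference URLs and a --cw value."""
    if not ref_urls:
        raise ValueError("at least one reference URL is required")
    if not 0 <= character_weight <= 100:
        raise ValueError("--cw must be between 0 and 100")
    refs = " ".join(ref_urls)
    return f"{description} --cref {refs} --cw {character_weight}"

# Placeholder URLs; earlier entries are listed first on purpose.
prompt = midjourney_prompt(
    "two friends having coffee in a modern cafe, woman on the left, man on the right",
    ["https://example.com/maya.png", "https://example.com/carlos.png"],
    character_weight=90,
)
print(prompt)
```

Stating positions in the description ("on the left", "on the right") follows the advice above about specifying which character occupies which part of the composition.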

What Technical Challenges Still Affect Character Consistency?

Despite rapid advancement, several technical limitations persist in 2026. Understanding these constraints helps creators set realistic expectations and develop effective workarounds.

Fine Detail Preservation

Current systems struggle with minute details like specific freckle patterns, small tattoos, jewelry engravings, or brand logos on clothing. According to Midjourney documentation, the --cref parameter explicitly notes limitations in copying exact dimples, freckles, or t-shirt logos.

Workaround: Include critical details in prompts rather than relying on reference image capture. If a character has a distinctive scar above the left eyebrow, specify “thin vertical scar above left eyebrow” in every prompt.

Style Transfer Identity Loss

Transforming a photorealistic character into anime or cartoon style while maintaining identity remains challenging. IP-Adapter performs better than InstantID for drastic style changes, as its CLIP-based embedding captures more abstract geometric invariants. Research from Alibaba product insights shows IP-Adapter achieving 0.74 mean similarity scores across style transfers versus InstantID’s 0.66.

Workaround: Generate the character in the target style first, then use that stylized version as the reference for subsequent images. This approach maintains consistency within the chosen aesthetic rather than fighting style transfer limitations.

Extreme Pose and Occlusion Failures

Characters partially hidden by objects, in extreme foreshortening, or with significant occlusion often lose consistency. The AI lacks complete visual information and fills gaps with statistical assumptions that drift from the established identity.

Workaround: Generate occluded images using a two-stage process. First, create the character in a similar unoccluded pose. Second, use inpaint to add obscuring elements while preserving visible character portions. This technique maintains character across compositional complexity.

Lighting and Expression Entanglement

InstantID and similar tools sometimes entangle lighting conditions or facial expressions with core identity. A character reference showing strong side lighting may produce generations that inherit lighting characteristics along with facial features, limiting compositional flexibility.

Workaround: Use evenly lit, neutral expression references for maximum generation flexibility. Save expressively lit or emotionally charged reference images for specific use cases matching those conditions.

How Can Storytelling Benefit from Perfect Character Consistency?

Visual storytelling transforms when characters maintain identity across scenes. The creative applications span industries and use cases, from independent creators to enterprise campaigns.

Sequential Narratives and Comics

Web comics, graphic novels, and sequential art historically required manual illustration to maintain character consistency. AI tools now enable solo creators to produce professional-quality serialized content. According to user feedback collected by Neolemon, self-publishing authors report reducing character illustration time from weeks to hours while maintaining perfect continuity.

The workflow for comic creation typically involves:

- Design core characters using detailed prompts or LoRA training

- Generate character sheets showing multiple expressions and angles

- Create scene backgrounds separately for maximum control

- Compose final panels using consistent character references with varied backgrounds

- Use inpaint for speech bubbles and text integration

This modular approach enables creators to iterate on dialogue and pacing without regenerating entire scenes.

Marketing and Brand Campaigns

Brands using consistent AI-generated mascots or campaign characters report significant cost savings. According to testimonials on AI Consistent Character, marketing teams generate unlimited variations from a single reference—different outfits, settings, expressions—while maintaining brand recognition. The traditional alternative (multiple photoshoots or commissioned illustrations) costs exponentially more.

Campaign workflow:

- Create brand character aligned with target demographic

- Generate master library of poses, expressions, and scenarios

- Produce localized variations for different markets

- Maintain consistency across digital ads, print materials, and social content

- Update character seasonally without losing brand recognition

Game Development and Virtual Environments

Game studios leverage consistent character tools for concept art, promotional materials, and even in-game assets. While real-time game engines require optimized 3D models, AI-generated 2D assets serve multiple production needs. According to Human Academy research, character consistency is crucial for maintaining cohesive visual narratives in gaming.

Production integration:

- Generate concept variations for character designers

- Create promotional artwork showing consistent characters in diverse scenarios

- Produce marketing materials featuring recognizable character identities

- Develop reference sheets for 3D modelers

- Generate UI elements and character portraits maintaining visual continuity

Educational Content and Training Materials

Educational creators benefit from consistent character avatars appearing throughout courses or learning materials. Students develop familiarity with recurring instructors or guide characters, improving engagement. Research cited in user testimonials shows that consistent visual presence helps learners maintain focus and builds rapport with educational content.


What Are the Ethical Considerations for Character Consistency?

As character consistency technology advances, ethical considerations become increasingly important. Creators must navigate questions of identity, representation, and responsible use.

Real Person Replication

Most platforms explicitly prohibit or discourage using character consistency tools to replicate real people without consent. Midjourney documentation states their --cref parameter “is not designed for real people/photos (and will likely distort them).” This limitation serves both technical and ethical purposes: protecting individuals’ likeness rights while managing user expectations.

Best practice: Generate original AI characters rather than attempting to recreate real individuals. For professional projects requiring specific likenesses, obtain proper model releases and permissions.

Deepfake Prevention

Character consistency tools share technical foundations with deepfake technology. Responsible platforms implement safeguards preventing malicious use. According to InstantID’s Hugging Face documentation, users are “obligated to comply with local laws and utilize it responsibly.”

Creator responsibility: Use consistency tools for legitimate creative purposes—storytelling, art, education, marketing. Avoid creating deceptive content or impersonating real individuals.

Representation and Bias

AI training data inherits biases from source materials. Creators generating diverse characters should actively test consistency across different ethnicities, ages, body types, and abilities. Some systems perform better with certain demographic features due to training dataset imbalances.

Inclusive workflow: Generate test batches across demographic variations, identify inconsistencies or quality differences, adjust prompts or tools to achieve equitable results, document and report systematic biases to platform developers.

How Will Character Consistency Evolve in 2027 and Beyond?

Current trajectory points toward several significant developments in character consistency technology.

Video Integration

Character consistency in video represents the next frontier. According to Stable Diffusion Art, Hunyuan Video supports LoRA, a first for large video models. This enables consistent characters in motion, opening possibilities for AI-generated animation and film production.

Technical challenges remain around temporal coherence—maintaining identity frame-by-frame while character movement creates natural pose and expression variation. Solutions involve identity-preserving adapters combined with motion-aware architectures that understand how facial features transform during animation.

Real-Time Generation

Processing speed improvements enable real-time character consistency for interactive applications. Future implementations may support live character generation in gaming, virtual reality, or interactive storytelling where user choices determine character appearance and actions.

Multi-Modal Consistency

Extending consistency beyond visual appearance to voice, personality, and behavioral patterns creates truly cohesive characters. Integration with language models allows characters to maintain not just visual identity but consistent personality across conversations, decisions, and story developments.

Improved Fine Detail Control

Next-generation systems will better preserve minute details—specific jewelry, unique tattoos, brand elements—while maintaining overall identity. This advancement enables character consistency for applications requiring precise visual branding or trademark compliance.

Field Reports: Real Creator Experiences with Character Consistency

User experiences across platforms reveal practical insights beyond technical specifications.

Game Developer Success Story

“A Reddit user has examined Gemini’s character consistency, and the results are breathtaking. Not only does the woman look incredibly realistic, but it’s the consistency that’s surprising. Countless fake profiles are already being created on Instagram and other platforms. The…” (Chubby♨️, @kimmonismus, December 28, 2025, pic.twitter.com/gtIMGJ2M8i)

A Reddit user examined Gemini’s character consistency, noting “breathtaking” results with realistic appearance and surprising consistency. The developer generated an entire cast for an indie game, reporting that “every character maintains their unique identity across hundreds of poses and expressions.”

Marketing Team Implementation

A marketing director reported on AI Consistent Character:

“We use AI consistent characters for all our campaigns now. One photoshoot gives us unlimited variations. Different outfits, settings, expressions—all perfectly consistent. Huge cost and time savings!”

Solo Artist Perspective

An independent digital artist shared:

“As a solo artist, Consistent Character AI is invaluable. I design a character once and get them in any pose or expression I need. The time saved on maintaining consistency lets me focus on storytelling.”

Educational Content Creator

An educator creating course materials noted:

“Creating educational content with consistent characters keeps students engaged. Our AI instructor appears in different scenarios throughout the course, maintaining perfect consistency. Students connect better with familiar faces.”

What Common Mistakes Should I Avoid?

Experience reveals frequent pitfalls that compromise character consistency results.

Inconsistent Reference Images

Using multiple reference images with significant lighting, angle, or quality differences confuses identity extractors. The AI attempts to reconcile contradictory information, producing averaged results that match no single reference.

Solution: Use a single, high-quality, evenly lit, front-facing reference as your primary anchor. Add supplementary references only when necessary for specific angles, ensuring they clearly show the same character.

Overreliance on Keywords

Expecting prompt keywords alone to maintain consistency without reference images or trained models produces unreliable results. Generic descriptions like “blonde woman in red dress” generate different interpretations each time.

Solution: Always anchor consistency with technical tools—reference parameters, identity adapters, or LoRAs. Use prompts to specify variables (pose, environment, lighting) while tools handle identity preservation.

Insufficient Testing

Generating a single successful image doesn’t validate consistency. Characters may appear identical in one scenario but diverge dramatically in others—especially with pose changes, different lighting, or style variations.

Solution: Generate test batches spanning diverse conditions: multiple angles (frontal, profile, three-quarter), various lighting (bright, dim, dramatic), different expressions, and style ranges. Identify failure patterns before committing to production.
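A test batch can be screened numerically by comparing an identity embedding of each output against the reference. The sketch below assumes the embeddings have already been extracted by a face recognition model, which is not shown. The three-number vectors are made-up stand-ins (real embeddings are far larger), and the 0.6 threshold is an arbitrary illustration, not a published standard.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def flag_drift(reference, batch, threshold=0.6):
    """Return names of outputs whose similarity to the reference is below threshold."""
    return [name for name, emb in batch.items()
            if cosine_similarity(reference, emb) < threshold]

# Made-up embeddings standing in for face-model output.
reference = [0.9, 0.1, 0.4]
batch = {
    "frontal": [0.88, 0.12, 0.41],
    "profile": [0.7, 0.3, 0.5],
    "dramatic_light": [0.1, 0.9, 0.2],
}
drifted = flag_drift(reference, batch)
print(drifted)
```

Grouping flagged names by condition (angle, lighting, style) shows which kinds of variation the current setup handles poorly.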

Ignoring Platform Strengths

Attempting to use Midjourney for scientific realism or Stable Diffusion for casual experimentation misaligns tool capabilities with project needs. Each platform optimizes for different use cases.

Solution: Match platform to project requirements. Use reference-based systems (Midjourney, OpenArt) for rapid iteration and art direction. Deploy technical systems (InstantID, LoRA) for precision control and production workflows.

Definitions: Technical Glossary

- Character Consistency: The ability to generate multiple images of the same character while maintaining identical facial features, body proportions, and recognizable identity across different poses, lighting conditions, and scenarios.

- LoRA (Low-Rank Adaptation): A fine-tuning technique that trains small adapter layers on top of frozen base models, creating compact files (10-200MB) that modify Stable Diffusion’s output to consistently generate specific characters or styles.

- InstantID: A plug-and-play identity preservation system using face encoders and ControlNet to maintain character appearance in AI-generated images without test-time fine-tuning, requiring only a single reference image.

- IP-Adapter: An adapter system using cross-attention mechanisms to inject image features into text-to-image diffusion models, enabling reference-based generation while balancing text prompt control and visual fidelity.

- ControlNet: A supplementary neural network that adds spatial or structural guidance to image generation by processing reference images for pose, edges, depth, or other compositional elements.

- Character Reference (--cref): A parameter in Midjourney that analyzes a reference image’s facial features, hair, and clothing, then applies those characteristics to new generations while allowing scene and style variations.

- Character Weight (--cw): A numerical parameter (0-100) controlling how strictly AI systems follow reference images, with higher values preserving more details and lower values allowing greater creative variation.

- Training Dataset: A collection of curated images (15-30 in the workflow described in this guide) used to teach AI models about a specific character through LoRA fine-tuning, typically requiring diverse poses, expressions, angles, and lighting conditions.

- Identity Embedding: A mathematical representation extracted from face recognition models that captures unique facial characteristics in a compact form, enabling AI systems to recognize and reproduce specific identities.

- Prompt Engineering: The practice of carefully structuring text descriptions to guide AI image generators, separating consistent identity information from variable scene elements for optimal character consistency.

FAQ: Character Consistency Questions Answered

- Q1: How does character consistency improve when using dedicated AI tools versus generic prompts?

Character consistency improves dramatically with dedicated tools because they employ technical mechanisms beyond text interpretation. Generic prompts rely entirely on language model understanding, which generates probabilistic variations each time. Dedicated systems use face recognition embeddings, spatial encoders, and trained adapters that mathematically preserve identity features. Research shows reference-based systems achieve 85-95% similarity scores versus 30-50% for prompt-only approaches. Tools like InstantID and character-focused LoRAs provide quantifiable consistency improvements measurable through FaceNet similarity metrics, ensuring the same character appears recognizable across hundreds of generations.

- Q2: Can character consistency be maintained when changing art styles dramatically?

Character consistency across dramatic style changes (photorealistic to anime, cartoon to oil painting) presents significant challenges but remains achievable with proper techniques. IP-Adapter demonstrates superior performance for style transfers due to CLIP-based embeddings capturing abstract geometric features rather than surface appearance. The workflow involves generating a character in the target style first, then using that stylized reference for subsequent images within the same aesthetic. OpenArt specifically optimizes for style-agnostic character consistency, maintaining identity across realistic, anime, and cartoon styles. Expect some identity drift with extreme transformations: 75-85% consistency versus 90%+ within single styles.

- Q3: What training data requirements ensure optimal character consistency with LoRA?

Optimal character consistency with LoRA requires 15-30 high-quality images showing diverse angles, expressions, and poses. Quality outweighs quantity: fewer excellent images outperform large datasets with poor diversity. Effective training data includes frontal, profile, and three-quarter views; neutral, smiling, and various emotional expressions; different lighting conditions (soft, bright, dramatic); multiple poses if full-body consistency matters; consistent clothing or noted outfit variations in captions. Character sheets (single images with multiple poses) can be divided into individual training examples. Training on an RTX 4090 completes in 1-2 hours with proper datasets, while insufficient or homogeneous data produces inconsistent results regardless of training time.

- Q4: How does character consistency technology handle multiple characters in single scenes?

Character consistency with multiple characters requires individual identity preservation for each person plus compositional coherence. The most reliable method trains separate LoRAs with unique trigger words, then combines them using weighted syntax in prompts. For platforms like Midjourney, concatenating multiple reference URLs with --cref URL1 URL2 blends character information, though earlier URLs receive stronger influence. Advanced workflows use regional prompting or inpainting to control individual character placement. Testing shows maintaining 2-3 characters achieves 80-90% consistency success rates, while 4+ characters drop to 60-70% without careful composition planning. Professional workflows generate characters separately, then composite using image editing tools for maximum control over complex multi-character scenes.

Next Steps: Elevate Your Creative Workflow

Character consistency represents a fundamental shift in AI-assisted creative production. Whether you’re developing game assets, publishing serialized fiction, or managing brand campaigns, mastering these tools transforms your capability to produce coherent visual narratives.

Start experimenting today: Choose the platform matching your needs—Midjourney for rapid iteration, OpenArt for style versatility, or Stable Diffusion with LoRA for technical control. Generate test characters, explore consistency across scenarios, and identify which workflows serve your specific creative vision.

For more advanced techniques, practical workflows, and the latest tools transforming creative production, explore Nowadais!

Last Updated on January 25, 2026 9:05 pm by Laszlo Szabo / NowadAIs | Published on January 25, 2026 by Laszlo Szabo / NowadAIs