Last Updated on February 12, 2024 11:31 am by Laszlo Szabo / NowadAIs | Published on February 12, 2024 by Juhasz “the Mage” Gabor

Meet Boximator, ByteDance’s Video Manipulation AI Tool – Key Notes

- Boximator introduces fine-grained motion control for video synthesis.

- Developed by the ByteDance Research team, led by Jiawei Wang.

- Offers two constraint types for motion control: hard boxes and soft boxes.

- Preserves base model knowledge by freezing original weights during training.

- Employs a self-tracking technique to simplify learning box-object correlations.

- Achieves state-of-the-art video quality and robust motion controllability.

- Capable of creating complex video manipulations, like changing object motions.

Boximator – Video Manipulator from TikTok’s owner

Synthesizing videos with rich, controllable motion has long been a central challenge in computer vision.

Today, we look at Boximator, a new approach from ByteDance, the developer and owner of TikTok, that tackles this problem head-on. It promises fine-grained motion control for video synthesis.

The Concept Behind Boximator

Boximator is a new tool developed by a team of researchers led by Jiawei Wang. The team, affiliated with ByteDance Research, has unveiled Boximator as a plug-in for existing video diffusion models.

It introduces two constraint types: hard boxes and soft boxes.

“Boximator introduces two constraint types: hard box and soft box. Users select objects in the conditional frame using hard boxes and then use either type of boxes to roughly or rigorously define the object’s position, shape, or motion path in future frames.”
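To make the quoted description concrete, here is a minimal Python sketch of how such box constraints could be represented. The article does not describe Boximator's actual input format, so every name here (`BoxConstraint`, `unselected_objects`, the normalized coordinate convention, and frame 0 as the conditional frame) is an assumption made for illustration only.

```python
from dataclasses import dataclass
from typing import List, Literal, Tuple

# Hypothetical convention: (x1, y1, x2, y2), normalized to [0, 1].
Box = Tuple[float, float, float, float]


@dataclass(frozen=True)
class BoxConstraint:
    """One box placed on one frame for one object (illustrative only)."""
    frame: int                      # frame index the box applies to
    object_id: int                  # which object the box refers to
    box: Box                        # where the object should be
    kind: Literal["hard", "soft"]   # rigorous vs. rough constraint


def unselected_objects(constraints: List[BoxConstraint],
                       conditional_frame: int = 0) -> List[int]:
    """Return ids of objects that lack a hard box in the conditional frame.

    This mirrors the rule in the quote: objects are first selected with
    hard boxes in the conditional frame, then constrained in later frames.
    """
    selected = {c.object_id for c in constraints
                if c.frame == conditional_frame and c.kind == "hard"}
    return sorted({c.object_id for c in constraints} - selected)


constraints = [
    # Select the object in the conditional frame with a hard box.
    BoxConstraint(frame=0, object_id=1, box=(0.10, 0.20, 0.40, 0.80), kind="hard"),
    # Roughly guide it mid-way with a soft box.
    BoxConstraint(frame=8, object_id=1, box=(0.30, 0.15, 0.75, 0.90), kind="soft"),
    # Pin down its final position with another hard box.
    BoxConstraint(frame=15, object_id=1, box=(0.55, 0.20, 0.85, 0.80), kind="hard"),
]
print(unselected_objects(constraints))
```

In this sketch, a soft box is simply a looser region the object should stay within, while a hard box is meant as a tight bound; how Boximator actually encodes that difference is not covered here.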

How Boximator Works – Boximator’s Performance

Boximator’s training process preserves the base model’s knowledge by freezing the original weights and training only the control module.
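The idea of freezing the base model and training only the control module can be illustrated with a toy example in plain Python. This is not Boximator's training code: the parameter names, the `Param` class, and the single gradient step are hypothetical stand-ins, and in a deep learning framework the same effect is usually achieved by disabling gradients on the base model's parameters.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Param:
    """A single scalar weight with a flag saying whether it may be updated."""
    value: float
    trainable: bool


def sgd_step(params: Dict[str, Param], grads: Dict[str, float],
             lr: float = 0.1) -> None:
    """Apply one gradient-descent step, skipping frozen parameters."""
    for name, p in params.items():
        if p.trainable:
            p.value -= lr * grads[name]


params = {
    "base.w": Param(1.0, trainable=False),    # frozen base-model weight
    "control.w": Param(0.0, trainable=True),  # new control-module weight
}
grads = {"base.w": 0.5, "control.w": 0.5}     # made-up gradients

sgd_step(params, grads)
print(params["base.w"].value, params["control.w"].value)
```

Even though both weights receive a gradient, only the control weight moves, so whatever the base model already knows is left untouched.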

To address training challenges, it introduces a novel self-tracking technique that greatly simplifies the learning of box-object correlations.

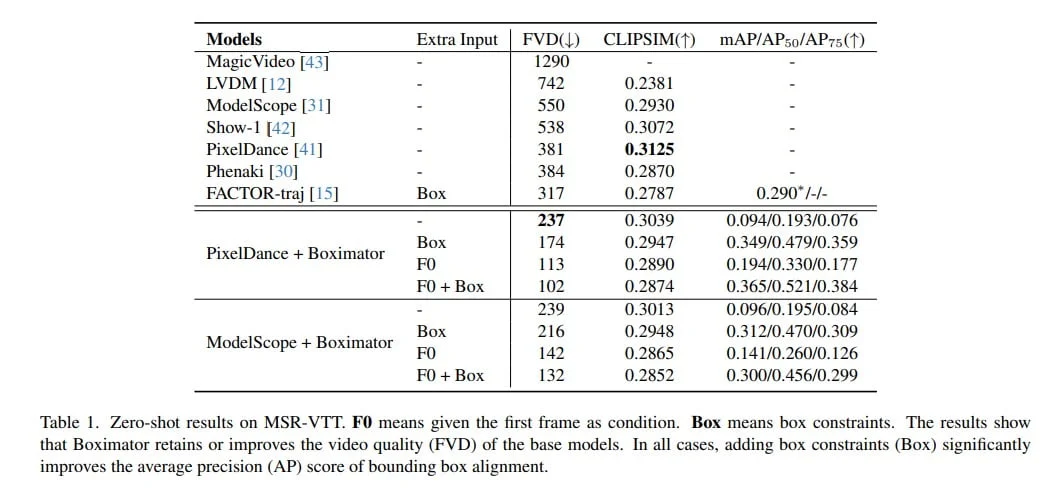

Empirically, Boximator achieves state-of-the-art video quality as measured by FVD (Fréchet Video Distance, where lower is better). It improves on two base models, and the scores improve further once box constraints are added. Its robust motion controllability is supported by large gains in the bounding box alignment metric.
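The article does not spell out how bounding box alignment is computed. A common building block for this kind of measurement is intersection over union (IoU) between the box a user requested and the box where the object actually ends up, so the sketch below shows that calculation under that assumption. The function name `iou` and the `(x1, y1, x2, y2)` box format are illustrative choices, not taken from Boximator.

```python
from typing import Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ax1, ay1, ax2, ay2 = a
    bx1, by1, bx2, by2 = b

    # Width and height of the overlap region (zero if the boxes are disjoint).
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h

    area_a = (ax2 - ax1) * (ay2 - ay1)
    area_b = (bx2 - bx1) * (by2 - by1)
    union = area_a + area_b - inter

    return inter / union if union > 0 else 0.0


requested = (0.0, 0.0, 2.0, 2.0)   # box the user asked for
observed = (1.0, 1.0, 3.0, 3.0)    # box detected in the generated frame
print(iou(requested, observed))
```

An IoU of 1.0 means the generated object sits exactly where it was asked to be, and 0.0 means the two boxes do not overlap at all.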

Boximator In Action

Boximator’s effectiveness can be visualized with examples.

For instance, it can take a clip captioned “A young woman is turning her head, revealing her face in profile” and direct the subject to “stand and then walk”, or have an “adorable pika turn to the camera”.

The wind can even “blow a woman’s umbrella away” on a rainy day.

Trying Out Boximator

At the time of writing, Boximator is still under development, with a release expected within the next 2-3 months. For users who want early access, the team has set up a trial channel: you can try Boximator by emailing the team.

Frequently Asked Questions

- What is Boximator?

- Boximator is a novel tool developed for enhancing video synthesis with precise motion control.

- Who developed Boximator?

- Boximator was developed by a team led by Jiawei Wang, affiliated with ByteDance Research.

- What unique features does Boximator offer?

- Boximator offers hard and soft boxes for fine-grained motion control in videos.

- How does Boximator improve video synthesis?

- It preserves base model knowledge and introduces self-tracking to simplify box-object correlations, enhancing video quality and motion control.

- What kind of video manipulations can Boximator perform?

- Boximator can manipulate objects in videos to change their motion or position in highly detailed ways.