OpenAI has launched GPT-5.5-Cyber, a specialized variant of its GPT-5.5 model released just two weeks ago, in a restricted preview for vetted cybersecurity professionals. The model is designed to help defenders find and patch software vulnerabilities and analyze malware in critical infrastructure environments. Access is deliberately narrow — and the conditions attached to it are strict.

OpenAI's GPT-5.5-Cyber Model Is Built for a Vetted Few

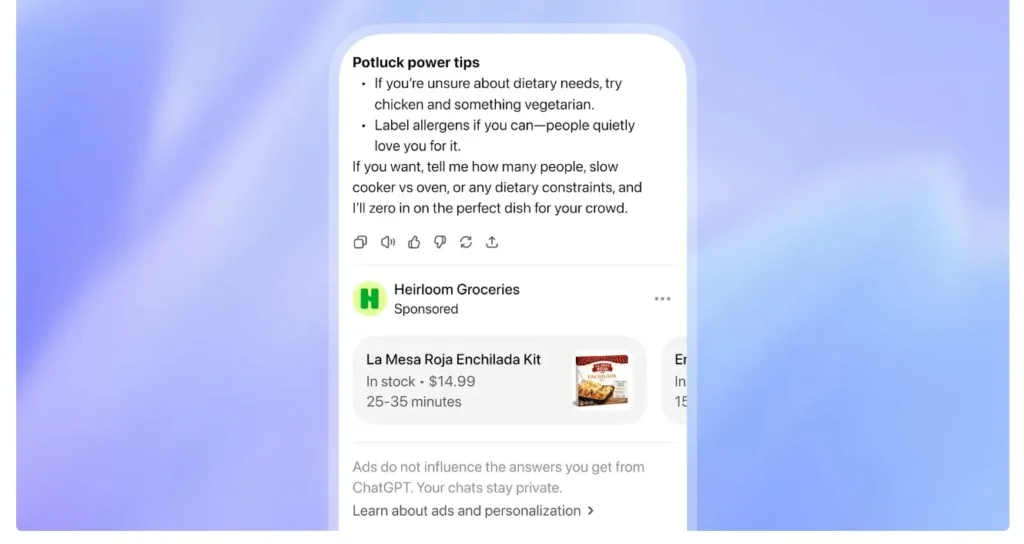

According to OpenAI, GPT-5.5-Cyber, internally referred to as “Spud,” is available only to defenders who qualify for the highest tier of the company’s Trusted Access for Cyber program. Applicants must be “responsible for securing critical infrastructure,” per OpenAI’s press release. Those who do not meet that threshold cannot use the model at all; it is not available to the general public or to most enterprise security teams.

Defenders granted access must meet an additional requirement: installing advanced account security for ChatGPT by June 1. That deadline applies to all approved program members. The Commerce Department’s Center for AI Standards and Innovation and congressional committees received previews of the model ahead of the public announcement.

The version made available through the program carries fewer guardrails than the publicly available GPT-5.5 model. OpenAI’s intent is to equip defenders with a tool calibrated for offensive security tasks — vulnerability discovery, malware analysis — while keeping those reduced restrictions out of unauthorized hands.

Real Capabilities, Real Risks, and Access Gaps

A source familiar with GPT-5.5-Cyber’s abilities told Axios that its performance is roughly on par with Anthropic’s Claude Mythos Preview, currently the benchmark for AI-assisted vulnerability exploitation. Recent security testing reportedly found that GPT-5.5 is nearly as capable as Mythos at finding and exploiting software bugs. That puts two powerful tools in the hands of defenders, but only those who can clear the vetting process.

OpenAI acknowledges the dual-use risk directly. The company has flagged that GPT-5.5-Cyber could be used by unauthorized individuals to carry out cyberattacks if access controls fail. The International Monetary Fund has separately warned that AI models of this caliber could destabilize the world economy, a concern that underscores why the staged rollout exists at all.

The practical gap is considerable. Organizations without the resources, clearances, or institutional standing to participate in OpenAI’s Trusted Access program are excluded from a tool that could materially improve their defensive posture. Smaller utilities, regional hospitals, and mid-market financial firms — all of which operate critical infrastructure — may not qualify on the program’s current terms.

The Adversary Gap and the Industry Race

Katrina Mulligan, OpenAI’s head of national security partnerships, framed the core tension plainly: “There is tension between the need to go fast and the need to be prudent.” She continued: “We’re going to have to figure out how we do that and do it in a responsible way, because some of our adversaries are moving really fast, and they are not moving with the care and concern that we are.”

That framing positions GPT-5.5-Cyber as a response to a competitive and geopolitical clock, not simply a product launch. The White House has been holding discussions on AI cyber threats and is actively weighing how best to secure these models — including possibly requiring the federal government to vet every model before deployment.

Anthropic’s head of cyber policy, Rob Bair, offered context on the strategy behind staged releases: “The staged release was actually to create what we call defender’s advantage, and we believe that window is somewhere in the months time frame — not years.” Both companies appear to be working against the same clock — and with a short runway before that advantage erodes.

Open Questions About Oversight and What Comes Next

The White House’s concern over advanced hacking capabilities tied to AI models raises a question neither OpenAI nor Anthropic has fully answered: what happens when the defender’s advantage window closes? If adversaries reach capability parity within months, the vetting process that currently limits access to GPT-5.5-Cyber may need to evolve faster than any government review process can accommodate.

It is also unclear how OpenAI plans to expand the Trusted Access for Cyber program beyond its current elite tier. Critical infrastructure defenders who lack the institutional standing to qualify today represent a significant unserved population — and one that may be most exposed to the exact threats GPT-5.5-Cyber is designed to counter.

For now, the model exists in a narrow corridor between broad deployment and total restriction. Whether that corridor widens — and how quickly — will depend as much on regulatory decisions in Washington as on OpenAI’s own roadmap.

FAQ – Frequently Asked Questions

What are the specific requirements for installing advanced account security for ChatGPT?

To meet the requirement, users must enable multi-factor authentication, use a secure password, and configure account activity monitoring. Additionally, approved program members are advised to regularly review and update their account security settings to ensure compliance. OpenAI provides detailed guidelines on its support pages.

How will the White House’s vetting process for AI models before deployment be implemented?

The White House is expected to establish a standardized evaluation framework for assessing AI model risks and capabilities. This framework will likely involve collaboration with industry stakeholders and government agencies to develop clear guidelines and criteria for model vetting. Implementation is anticipated to occur within the next 12-18 months.

What are the potential consequences if access controls for GPT-5.5-Cyber fail?

If access controls fail, unauthorized users could exploit GPT-5.5-Cyber’s capabilities to launch sophisticated cyberattacks, potentially causing significant financial losses and reputational damage for affected organizations. To mitigate this risk, OpenAI is working closely with cybersecurity experts to continuously monitor and improve the model’s access controls and security features.

Last Updated on May 9, 2026 1:05 pm by Laszlo Szabo / NowadAIs | Published on May 9, 2026 by Laszlo Szabo / NowadAIs