Last Updated on February 5, 2024 12:57 pm by Laszlo Szabo / NowadAIs | Published on February 5, 2024 by Juhasz “the Mage” Gabor

OpenAI’s Military Ban Lifted Silently – Open Doors for AI Driven Battlefields? – Key Notes:

- Policy Shift: OpenAI removes ban on military use of its AI tools.

- Military Partnerships: Potential collaboration with defense departments, including the U.S. Department of Defense.

- Ethical Concerns: Debate over AI in warfare, civilian safety, and AI biases.

- OpenAI’s Stance: Emphasis on non-harm and prohibition of weapon development.

- Future Implications: The decision impacts the role of AI in military applications and raises questions about ethical guidelines.

OpenAI’s About-Turn

OpenAI, a leading AI company, has made a significant policy shift by quietly removing its ban on military usage of its advanced AI tools. The decision has caught the attention of the Pentagon and raised questions about its potential implications and consequences.

This article looks at the details of OpenAI’s policy change, the concerns surrounding it, and the impact it may have on the future of AI in military applications.

The Ban on Military Usage

Until recently, OpenAI had strict guidelines in place that prohibited the use of its technology for military and warfare purposes. The policy grouped these uses under the term:

“activity that has high risk of physical harm”

The company’s policies explicitly stated that its models, such as ChatGPT, should not be used for activities that pose a high risk of physical harm, including weapons development and military applications.

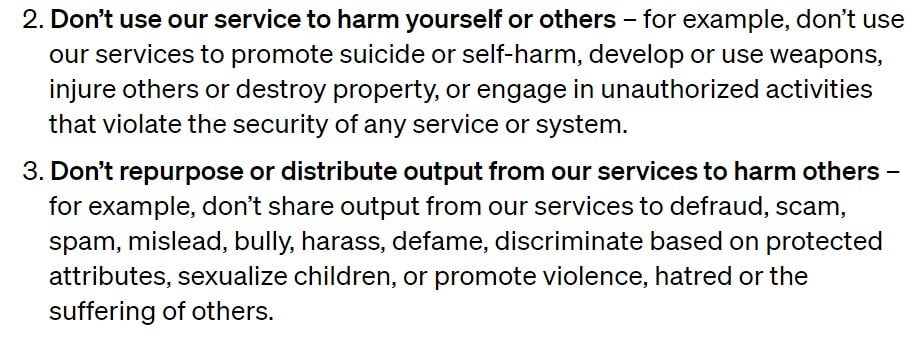

However, OpenAI has now removed the specific reference to the military from its policies, while still emphasizing the prohibition on using its tools to harm oneself or others or to develop or use weapons.

The removal of the ban on military usage is seen as a significant departure from OpenAI’s previous stance and has raised concerns among experts and observers alike.

The policy change opens the door for potential partnerships between OpenAI and defense departments, including the U.S. Department of Defense, which has expressed interest in utilizing generative AI for administrative and intelligence operations.

The Implications and Criticisms

The policy change by OpenAI has sparked a debate about the ethical implications of AI in military applications.

Critics argue that the use of AI in warfare raises concerns about civilian casualties, biases in AI systems, and the potential escalation of armed conflicts.

They point out that the outputs of AI models like ChatGPT, while often convincing, prioritize coherence over accuracy and can suffer from hallucinations that may lead to imprecise and biased operations.

OpenAI’s Response and Clarity

OpenAI spokesperson Niko Felix said the policy change was intended to provide clarity and allow for discussions around national security use cases that align with OpenAI’s mission:

“We aimed to create a set of universal principles that are both easy to remember and apply, especially as our tools are now globally used by everyday users who can now also build GPTs. A principle like ‘Don’t harm others’ is broad yet easily grasped and relevant in numerous contexts. Additionally, we specifically cited weapons and injury to others as clear examples.”

Felix also mentioned OpenAI’s collaboration with the Defense Advanced Research Projects Agency (DARPA) to develop cybersecurity tools for critical infrastructure and industry. This collaboration aims to secure open-source software that is crucial for national security.

Felix stated that OpenAI is aware of the risks and harms associated with military applications of its technology.

While the policy update removes the explicit ban on military and warfare, the principle of not causing harm to oneself or others still applies.

Any use of OpenAI’s technology, including by the military, to develop or use weapons, injure others, or engage in unauthorized activities that violate the security of any service or system, is disallowed.

The Role of AI in the Military

The military has been exploring the potential of AI for various applications.

While OpenAI’s technology may not directly enable lethal actions, it can be utilized in killing-adjacent tasks such as writing code or processing procurement orders.

For example, military personnel have already utilized custom ChatGPT-powered bots to expedite paperwork.

However, experts argue that even if OpenAI’s tools were deployed for non-violent purposes within a military institution, they would still be contributing to an organization whose primary purpose is lethality.

The involvement of AI in military intelligence, targeting systems, and decision support raises concerns about accuracy, biases, and the potential for unintended consequences.

The Need for Ethical Guidelines

The policy change by OpenAI highlights the importance of establishing clear and comprehensive ethical guidelines for AI usage, particularly in military contexts.

As AI technology continues to advance, it is crucial to address the potential risks and implications associated with its deployment in warfare.

Sarah Myers West, managing director of the AI Now Institute, emphasizes the need for transparency and enforcement in OpenAI’s policy. In a related post, the AI Now Institute wrote:

Prof. Suchman questions notions of who constitutes an “imminent threat” that are baked into “AI-enabled warfighting”, and the legitimacy of this targeting under the Geneva Convention.

— AI Now Institute (@AINowInstitute) January 26, 2024

The vagueness of the language raises questions about how OpenAI intends to approach enforcement and whether it can effectively navigate the challenges posed by military contracting and warfighting operations.

Conclusion

OpenAI’s decision to remove the ban on military usage of its AI tools has sparked discussions about the intersection of AI and warfare.

While the company aims to provide clarity and allow for national security use cases, concerns remain about the potential consequences and ethical implications of AI in military applications.

As the field of AI continues to evolve, it is essential for organizations like OpenAI to establish robust ethical guidelines and ensure responsible development and deployment of AI technology.

Balancing the benefits and risks of AI in military contexts is a complex task that requires careful consideration and thorough evaluation of the potential impact on human lives and global security.